Frankly, I wasn’t sure if discussing the differences and how they can be used was really worth tackling. Is it just a semantics debate among academics? Is there really any substantive difference in their conduct? I’m still not sure it deserves a ton of time picking them apart, but the question comes up at times and I’ve clearly decided to write about it, so, onward!

There are a couple of differences worth pointing out.

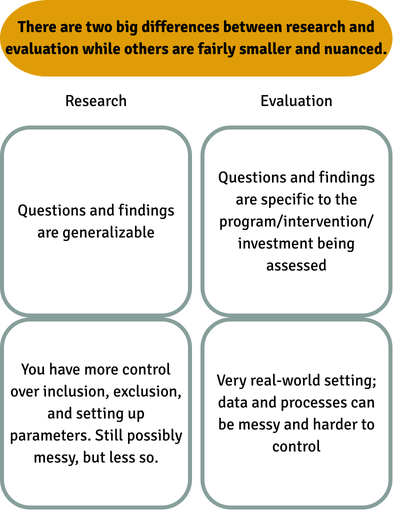

Here’s a quick table for you:

Here’s the explanation a bit more in depth.

1. The first difference lies in the generalizability of the studies.

- There is research, where we would hope to generalize findings or build findings and conclusions from a set of data. Keyword: generalizations.

- And then there’s evaluation, where we’re looking at effectiveness of something specific that has some natural parameters, like a program aiming to serve a defined population. Keyword: specific.

- One typically requires IRB approval (research) and one can be hit or miss in this respect (evaluation).

2. The second difference is a more practical one and lies in the level of control you have over the study parameters. With evaluation you’re working purely in the real world, where things can be messy. Research can certainly be messy too, and it very often relies on real-world data, but there are usually more variables we control for, include, or exclude, which makes things just a bit…different.

Otherwise, any other practical differences are becoming more and more pointless to dissect. They come down to semantics and minute nuances that don’t mean much in reality, especially these days, with stronger pushes for more data-driven and data-forward evaluation methods and techniques, even as they’re blended with QA/QI initiatives.

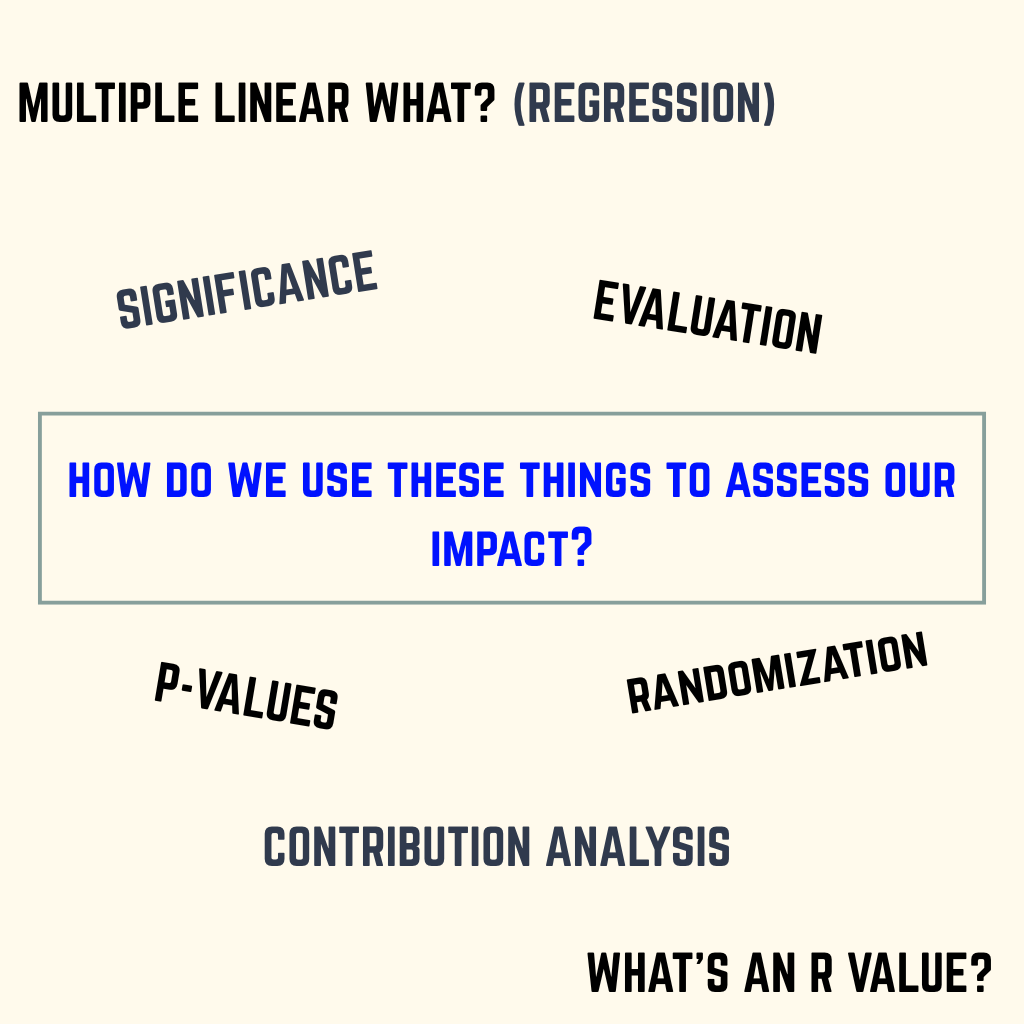

For example, a regression analysis is a regression analysis any way you slice it. A well-crafted survey will yield good data regardless of whether it’s used for a study, an evaluation, field research, or whatever. The key is that we’re all focused on learning something meaningful, regardless of the terminology, and this focus on learning will mean a healthy use of statistics and other analytical methods.

All of this then raises the question: What do I do to get the information I need to assess my team/program/intervention/fund’s impact?

Don’t get tied up in the vocabulary. Focus by making a list of the things you want or need to learn and put that into a sentence or two. Share it around, edit it a bit, then let a researcher or evaluator guide you from there.

If you’re looking to do this all yourself or with your team, here are the key steps to develop, use, and apply to get the insights you need (whether we’re calling it M&E, research, IMM, or program CQI).

Let’s dig in:

- Start from a place of good questions. See this article for more on that.

- Make sure someone on your team has a very solid foundation in statistics. If you don’t have that person, you need to find someone external and bring them into the planning.

- What direction are your questions and learning objectives going? Are they retrospective or prospective? Are you looking at a point in time or ongoing events? This will influence the kind of data you access, use, and collect, and the types of analyses you’re able to do with your data. Be sure you share these directionalities when talking about your learnings.

- MAKE. A. PLAN. Literally. Write down the steps and methods of your study, just like the outlines you learned to write in the 5th grade. Be prepared to edit this as needed.

- Consider your data collection tools, capabilities, and who/what you’re collecting data from. What does that look like? Consider technical capabilities and things like internet accessibility. Test these tools and processes. Edit them. Test them again. Then deploy once the bugs are as tamed as they can be.

- Do you have enough power in your data to draw a reasonable conclusion that the results you have are indicative of reality? Make sure your sampling is adequate for what you need.

- Are you talking about correlation or causality? If you’re even thinking for a second of using the word causality, did you, or will you, set up an appropriate study or methodology to assess cause and effect? If no, or you have no idea how to tell, abandon that phrase and any of its synonyms right now. You’re looking for the word correlation, instead.

- What do you mean by significant? In statistics, this implies that statistical testing has been done and that the change you observed is unlikely to be due to chance alone. Did you do this type of testing? If not, don’t use this word, even editorially. State the uncertainty and move on.

- Remember a lot of things will be iterative, so be prepared for revisions to the plan even through the analysis stage.
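To make the power and significance steps a little more concrete, here is a minimal sketch in Python using only the standard library. It assumes a deliberately simple setup that may not match yours: two groups and a yes/no outcome, handled with normal-approximation formulas. The function names and the 50% and 60% rates are hypothetical, chosen only for illustration, and this is no substitute for the statistics person mentioned above.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_two_proportions(p1, p2, alpha=0.05, power=0.80):
    """Approximate number of participants needed PER GROUP to detect a
    difference between two proportions with a two-sided z-test.

    Assumption: normal approximation, equal group sizes.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for alpha
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p1 - p2) ** 2)


def two_proportion_p_value(successes_a, n_a, successes_b, n_b):
    """Two-sided p-value for the difference between two observed
    proportions, using a pooled two-proportion z-test.

    Assumption: samples are independent and large enough for the
    normal approximation to be reasonable.
    """
    p_a = successes_a / n_a
    p_b = successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / std_error
    return 2 * (1 - NormalDist().cdf(abs(z)))


if __name__ == "__main__":
    # Hypothetical planning question: how many people per group would we
    # need to detect a change from a 50% to a 60% success rate?
    print(sample_size_two_proportions(0.50, 0.60))

    # Hypothetical result: 60 of 100 succeeded with the program,
    # 50 of 100 without it.
    print(two_proportion_p_value(60, 100, 50, 100))
```

Under these assumptions, detecting a shift from 50% to 60% takes about 385 people per group, and the 60-versus-50 result with only 100 people per group gives a p-value of roughly 0.16. That is a good illustration of why an underpowered comparison shouldn’t be described as significant, even when the difference looks large.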

Keep in mind that many of the basic tenets of good research are also what you need for strong evaluations and impact reporting.

More and more, the rigor that was traditionally reserved for research is the level of evidence-based analysis that people want to see in evaluations and impact reporting. There’s a lot of (good, deserved!) focus on analyzing for the significant, outcomes-based change and impact we’re all striving for.

It’s no longer sufficient to claim impact based on a few summary statistics of outputs or without deeper investigation into outcomes and “the why”. This level of rigor is also what builds trust in your work when you’re able to execute it well and then communicate what you’ve done.

How are you feeling about setting up studies and data collection systems? Do you love it, or is it terrifying and overwhelming? If you’re in the overwhelm camp, I can help you with that.