Besides conjuring images of shoulder pads and ill-fitting pantsuits circa 1994…

quality improvement may also evoke memories of KPIs, questionable employee performance reviews, and supervisors past or present conflating coming into the office at 8:34 instead of 8:30 with something that actually matters.

Fortunately, CQI has much more than this to offer those of us doing monitoring and evaluation, and it can go a long way toward helping your organization reach its impact goals.

I think it’s important to borrow tools from other fields or sectors. Historically and generally, CQI has been in the realm of “business” and evaluation has been more for the academics, non-profits, governments, and other largely grant-funded institutions.

CQI has skewed towards maximizing efficiency and reducing costs, while evaluation has veered towards assessing effectiveness (often still done at the end of, and not during, an intervention).

What I like about CQI is that there’s never really an implication that we’re waiting until the intervention or work is over to assess how it’s going. Because it’s rooted in productivity, there’s no incentive to wait.

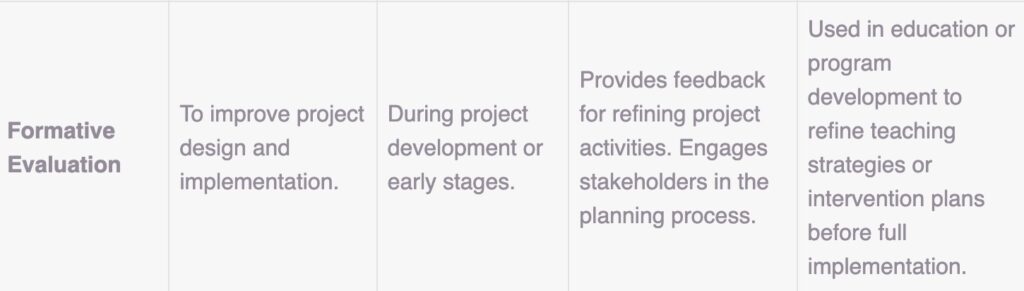

Fortunately, many CQI principles—if not the classic methodologies per se—have found their way into evaluation over the years. Take a look at this definition from Eval Community:

Clearly there is conceptual overlap with CQI here.

I would argue, though, that this application extends beyond the early stages. I personally prefer blending CQI and evaluation methodology whenever the question(s) at hand aren’t strictly experimental or research-focused.

That’s because in practice, many of us are looking to assess effectiveness, areas for improvement, and learning opportunities all at the same time. Our methods should reflect that. For what it’s worth, I often think of the “learning” in the MEL and MERL acronyms as something that happens on some regular cadence.

Additionally, many organizations wanting to evaluate their work are operating on an ongoing basis. They often don’t have the resources or time to wait until a defined midterm or final evaluation to course correct. Blending CQI into your evaluation work is a great way to get more out of your time and effort.

This is how I pull CQI into M&E

A couple of caveats: this is for when we’re not really looking at anything experimental, and are focused on outcomes and/or operations that are ongoing. The intention should be to continuously learn and adapt over the life of the project.

> Follow your usual M&E planning. Start with defining all of your usual monitoring and evaluation components, like goals, objectives, activities, and short- and longer-term outcomes with related benchmarks. Put these into a logic model to organize the information and visualize your theory of change.

> Determine which of these components will be regularly monitored for CQI and which are better suited to an assessment/evaluation over a longer term. Clearly define these in the methodology document that accompanies your logic model. *We all know sustainable change takes time, so focus early-stage CQI on refining processes and operations, and seek community feedback on immediate impressions of your work. Adapt the focus of your CQI monitoring as processes become more refined and as it makes sense for improving on the activities that feed your expected short- and long-term outcomes.*

> Create feedback loops. This is arguably the most necessary component of good CQI. You have to have a way to share your data and information with stakeholders in order to make any actual improvements. Have a defined cadence for doing this, and make sure the stakeholder review process involves internal, external, and client/patient/community voices. During the review process, compare where you are to your goals, and seek feedback on why things may or may not be working well. Consider reviewing operational processes with a process map. This helps pull everything together to see where processes or activities can be changed to address any shortcomings in the data cycle that’s being reviewed.

> Decide how you will review information within these feedback loops. It helps to establish key questions going into any review, along with the data. These questions should be related to what’s most meaningful to or potentially hindering your work, but can include things like:

- Have we seen the expected improvements?

- Has the observed change been worth the investment so far?

- When reviewing operational and process maps (these can be a simple flowchart), where are the bottlenecks that are hindering our work?
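
If your monitoring data already lives in a spreadsheet or a script, the first question above ("Have we seen the expected improvements?") can be roughed out in a few lines before a review meeting. This is only a minimal sketch: the `Indicator` fields, the example indicators and numbers, and the 50% expected-progress threshold are all hypothetical, and measuring progress as the share of the baseline-to-target gap closed is just one simple way to do it.

```python
from dataclasses import dataclass


@dataclass
class Indicator:
    """One monitored measure. All names and numbers below are hypothetical."""
    name: str
    baseline: float
    current: float
    target: float


def progress_toward_target(ind: Indicator) -> float:
    """Share of the baseline-to-target gap closed so far (1.0 means target met).

    Works whether the target is above or below the baseline.
    """
    gap = ind.target - ind.baseline
    if gap == 0:
        return 1.0  # simplification: no gap to close, treat as on target
    return (ind.current - ind.baseline) / gap


def flag_for_review(indicators, expected_progress):
    """Names of indicators that are behind the progress expected at this review."""
    return [
        ind.name
        for ind in indicators
        if progress_toward_target(ind) < expected_progress
    ]


indicators = [
    Indicator("clients completing intake", baseline=40, current=55, target=80),
    Indicator("average days to first appointment", baseline=30, current=18, target=14),
]

# Assume we expect to be halfway to each target at this review point.
behind = flag_for_review(indicators, expected_progress=0.5)
print(behind)
```

A list like `behind` doesn't answer why something is lagging. It just tells you which indicators to bring to stakeholders first when you ask what is or isn't working.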

Think of CQI as your more frequent reviews and tweaks that can help you make meaningful changes before you get to a full-scale evaluation of the overall program. This is all part of the learning process, and if we’re not regularly using our data to learn and adjust, then what are we collecting it all for? Certainly not to learn how we did 5 years later…